If you work in tech, you hear the word “data” quite often.

Data breach. Big data. Selling data. Data science.

What is data really, and is it the same in all these contexts? What do you need to know about data, as a non-engineer?

Our next few posts will answer these questions, starting today with a discussion of big data!

But first, what is data really...

Data is structured information

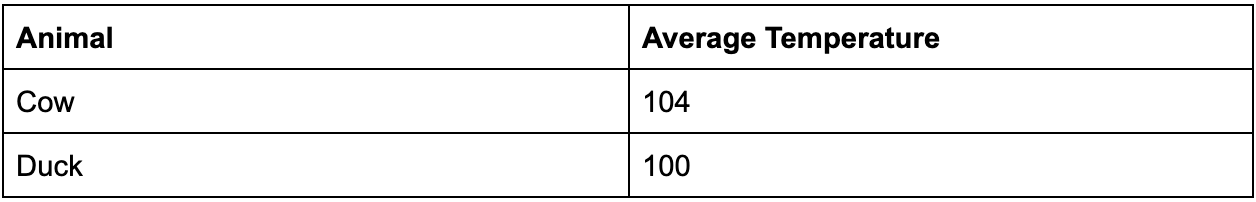

Here is some information:

“The average body temperature of a cow is 104 degrees F.”

Here is that information as data:

Here’s another great nugget of information

“A duck, even while standing on ice, maintains a body temperature of 100 degrees”

Here’s our new data:

Data is what you see in a spreadsheet. It’s a set of values with labels.

It’s hard for a computer to understand the kinds of sentences shown above. However it’s easy for a computer to understand and use information that is structured as data.

What is big data?

Big data has become something of a buzzword, and thus is applied to lots of different things. However, most of the time when someone talks about big data they simply mean the same kind of structured information you see above, but enough of it that you can’t process it on one computer.

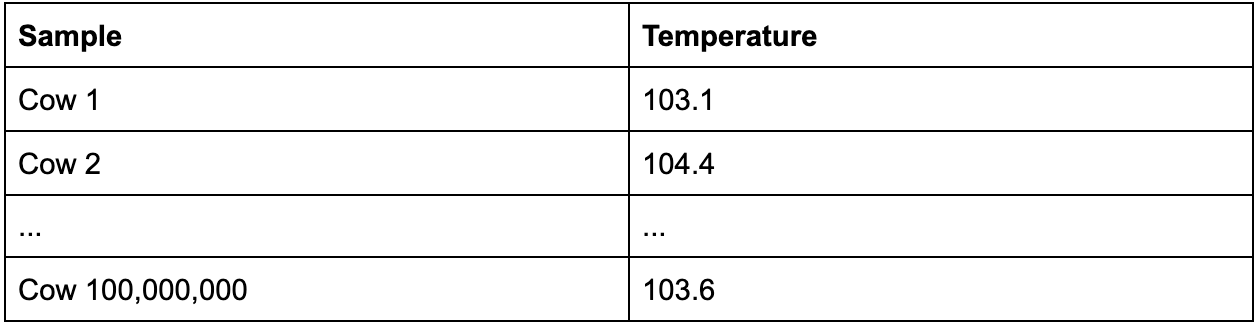

Here is some big data:

This data table could be used to answer the question “what is the average temperature of a cow”, without needing to trust Google. The reason big data has become so popular is because often you can make more trustworthy conclusions if you have more data.

Whether or not you need “big” data depends on the conclusions you want to make. In the case of cows you can probably make a solid estimate of average temperature with just 100 cows. This is because the variation in cow temperature is small, among other things. So sampling 100M cows would be overkill.

However, if you wanted to determine the correlation between urban living and happiness, you’d need more data, maybe 10K samples.

If you wanted to compare two ads to see which one is more effective at driving a purchase 3 months later, you might need 1M samples.

How big is big?

First off, the “Big” in Big Data doesn’t refer to the amount of data being stored, it refers to the amount of data being processed.

For instance, there are about 500M tweets sent per day. Believe it or not, there are off-the-shelf databases that can handle this kind of traffic. As long as you don’t need to process tweets and compare them to each other, you can use an existing database like Firebase.

However, If you wanted to process all the tweets coming in to determine the most popular topics, things are harder. When you show a user their list of tweets, you only need to read 20 tweets at once. When you want to determine the most popular topics being tweeted, you need to read and compare millions of tweets. This is where normal data becomes big data.

Data lakes, warehouses and other big data related tech make it easy to process large amounts of data at the same time. They store data across many servers, and all the servers can process the data they have access to in parallel. When I was at Twitter, I wrote code that determined how popular different celebrities were on the platform. It processed millions of tweets per second, and it used lots of big data technology.

How hard is big data today?

Like everything software related, processing large amounts of data gets easier every year. At this point, big data is less about engineering than it is about knowing the third party technology that will serve your needs. If you need to store and process big data, it can really help to have someone who has experience building queries with Tableau or Looker, storing data in Big Query or Snowflake, and building pipelines with tools like Stitch.

That being said, the hardest part of big data is not tech, it’s getting the data in the first place! Analyzing 500M tweets is easier than ever. Getting people to tweet 500M times a day is hard, and no Data Lake will help with that.

Do I need Big Data?

If you think you need a Big Data stack, you’re not alone— most of my clients bring that up as a requirement when I do technical consulting. There is so much written about Big Data that it’s easy to buy into the hype, however 95% of my clients don’t collect or process enough data to warrant specialized investments. Furthermore, even if your business model is such that you will one day need big data, preparing now helps you little and wastes engineering time. At the point that you need to process more data than can fit on one server, you can easily move your data into a system that is designed for this.

That being said, if you meet the following criteria you should look into big data tools right now:

You are processing data, not just storing it. If you have 100M users using your platform, you can do that with any old database. However, if you need to process information related to all those users, then you’ll need Big Data tech.

You are processing lots of data.If you want to process 100K rows of data, you can use a normal database and processing techniques. If you need to process 1M+ rows of data, or are processing things that take a long time, like images, then you may need something special.

Start Small

I mentioned above that the hardest part of big data is getting the data. Well, the second hardest part is knowing what data to get. I’ve been on more than one project where we set up complex data architectures, collected data, ran our analyses, then realized that we weren’t collecting the data we needed to make the conclusions we wanted. For instance, one time, at Twitter, we launched a feature which we hoped would get more people to press the “Send DM” button on a user’s profile page. We spent weeks setting up an experiment only to realize post-launch that while we were measuring the button press, we weren’t measuring how many DMs were actually sent, which was what we really cared about. We had to redo the whole experiment.

It’s always best to start with small datasets— you can even do your calculations in a spreadsheet. Focus on analysis instead of getting lost in implementation. Then if your results are as expected at a small scale, go big.

Conclusion

Although “Big Data” has become a buzzword, it’s not all that different from small data. If you understand spreadsheets, then you should be able to understand when engineers talk about Big Data. In fact, many of the Data Leaders I know aren’t even engineers! They often come from finance backgrounds and picked up their technical knowledge along the way. Though there are some technical components to Big Data, the most challenging aspects are determining what and how to measure, which can benefit greatly from someone with a non-engineering background.

Check back in a week or two for a post on selling data, data security, and data breaches.